A while back we started getting alerts in the middle of the night on low disk space. A quick investigation led us to one of our ETL tasks.

The ETL task was working on a table that stored binary records we refer to as "dumps". Every night the task was fired to eliminate duplicate dumps, and free up some space.

To find duplicate dumps we used this query:

SELECT

id,

MIN(id) OVER (PARTITION BY blob ORDER BY id)

FROM

dumps

The query groups similar dumps by the blob field. Using a window function we get the ID of the first occurrence of each dump. We later use this query to remove the other duplicate dumps.

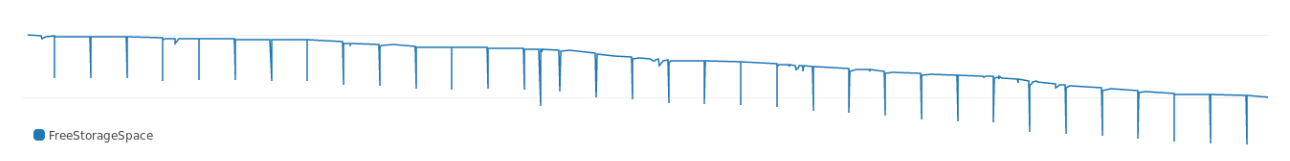

This query took some time to run. The log showed that while it ran, it consumed significant amount of disk space. The following chart shows the big dips in free disk space every night while the query was running:

As time passed the query consumed more and more disk space and the dips got deeper. Looking at the execution plan, the reason for the high disk usage became apparent:

WindowAgg (cost=69547.50..79494.14 rows=497332 width=40) (actual time=107.619..152.457 rows=39160)

Buffers: shared hit=3916, temp read=3807 written=3816

-> Sort (cost=69547.50..70790.83 rows=497332 width=36) (actual time=107.607..127.485 rows=39160)

Sort Key: blob, id

Sort Method: external merge Disk: 30456kB

Buffers: shared hit=3916, temp read=3807 written=3816

-> Seq Scan on dumps (cost=0..8889.32 rows=497332 width=36) (actual time=0.022..8.747 rows=39160)

Buffers: shared hit=3916

Execution time: 159.960 ms

The sort consumes a significant amount of disk space. In the execution plan above from a test dataset, the sort consumed ~30MB of disk space.

Why is This Happening?

PostgreSQL allocates memory for hash and sort operations. The amount of memory is controlled using the work_mem parameter. The default size of work_mem is 4MB. Once a sort or hash operation requires more than 4MB to complete, PostgreSQL will resort to temporary disk space.

Our query is obviously consuming more than 4MB of memory, and this is why we see the database using so much disk space. We decided that before we increase the parameter or add more storage, we should find another way to reduce the amount of space consumed by the sort.

Giving the Sort a Diet

The amount of space consumed by a sort is affected by the size of the dataset and the size of the sorting key. We can't change the size of the dataset, but we can reduce the size of the key.

To establish a baseline, let's see the average size of the sort key:

> select avg(pg_column_size(blob)) from dumps;

avg

----------

780

Each key weighs 780. One way to reduce the size of a binary key is to hash it. In PostgreSQL we can use md5 (yes, it is insecure, but fine for our purposes). Let's see what is the size of the blob hashed using md5:

> select avg(pg_column_size(md5(array_to_string(blob, '')))) from dumps;

avg

-----------

36

The size of a key hashed with md5 is 36 bytes. The hashed key is ~4% the size of the original key, way smaller.

The next step was to try our original query with the hashed key:

SELECT

id,

MIN(id) OVER (

PARTITION BY md5(array_to_string(blob, '')

) ORDER BY id)

FROM

dumps;

And the execution plan:

WindowAgg (cost=7490.74..8469.74 rows=39160 width=40) (actual time=349.394..371.771 rows=39160)

Buffers: shared hit=3916

-> Sort (cost=7490.74..7588.64 rows=39160 width=36) (actual time=349.383..353.045 rows=39160)

Sort Key: (md5(array_to_string(blob, ''::text))), id

Sort Method: quicksort Memory: 4005kB

Buffers: shared hit=3916

-> Seq Scan on dumps (cost=0..4503.40 rows=39160 width=36) (actual time=0.055..292.070 rows=39160)

Buffers: shared hit=3916

Execution time: 374.125 ms

Using the hashed key the query consumed only 4MB of additional disk space. That is ~10% of the previous size of 30MB. This means the size of the sort key has significant impact on the amount of storage consumed by a sort.

Extra Credit

In the example above we used md5 to hash the blob. Hashes generated with MD5 are supposed to be 16 bytes. However, using md5 we get bigger output size:

select pg_column_size( md5('foo') ) as md5_size

md5_size

-------------

32

The size of the hash is exactly double the size we expected. This is because md5 provides the hash as text represented in hexadecimal.

There is another way to hash with MD5 in PostgreSQL using the pgcrypto extension. pgcrypto can produce MD5 as bytea (binary):

create extension pgcrypto;

select pg_column_size( digest('foo', 'md5') ) as crypto_md5_size

crypto_md5_size

---------------

20

The size of the hash is still 4 bytes larger than we expected. This is because the bytea type uses an additional 4 bytes to store the length of the value.

To strip off these 4 bytes, we can resort to some hackery. As it happens, the uuid type in PostgreSQL is exactly 16 bytes, and it supports any arbitrary value. We can use that fact to strip off the remaining 4 bytes:

select pg_column_size( uuid_in(md5('foo')::cstring) ) as uuid_size

uuid_size

---------------

16

And there you have it. From 32 bytes using md5, down to 16 bytes using uuid.

To check the impact of this change I had to use a bigger dataset than the one I used in this article. Since I can't share the actual data, I'll only share the results:

| expression | size | disk used |

|---|---|---|

blob |

780 | 309MB |

md5(blob) |

36 | 11MB |

uuid_in(md5(blob)) |

16 | 7MB |

As the table above shows, the original query which caused us grief used 300MB of disk space (and woke us up at night). After we changed the query to use a uuid key, the sort used only 7MB of disk space.

Additional Considerations

The query with the hashed sort key runs much slower, even though it consumes less disk space:

| query | run time |

|---|---|

| blob | 160ms |

| hashed blob | 374ms |

Hashing utilizes more CPU. This caused the query with the hash to be slower. In our case, we were trying to solve a disk space problem. The task ran at night so we weren't concerned with the execution time. We were willing to make this compromise to solve the disk space problem.

This case was a good reminder that tuning a database is not only about making queries run faster. It's all about balancing the resources at our disposal and doing as much as possible with as little as possible.