In almost every project we work on, we use Django admin for support and operations. Over time we experienced an influx of new users and the amount of data we had stored grew rapidly. With a large dataset we started to experience the real cost of some Django admin features.

I often talk about making Django scale but what does it actually mean? It means getting consistent performance regardless of the amount of data. Over the past few years I've written about different approaches to scale and optimize Django admin for large tables:

- Things You Must Know About Django admin As Your App Gets Bigger: Included an overview of built-in options to optimize for larger tables.

- How to Add Custom Action Buttons to Django Admin: Explained how to make Django admin more suitable for operations.

- How to turn Django Admin into a lightweight dashboard: Showed how to add custom pages to the admin with fancy graphs and chart. This was our first dashboard (and we still use it!).

- 5 ways to make Django Admin safer: Described different measures we took to make our admin more secure (this one also inspired a package).

- Scaling Django Admin Date Hierarchy: Identified issues with the date hierarchy and suggested ways to alter the admin behavior to better handle large tables (we also turned it into a package!).

- Django Admin Range-Based Date Hierarchy : Looked at the queries generated by the date hierarchy and made them much faster. I'm especially proud of this one because I managed to get it into Django 2.1.

- How to add a text filter to Django Admin: We added a new type of filter to save some time when generating list filters.

After all of this, only one problem remained...

The Paginator

The last nail in Django admin's scalability coffin is the paginator:

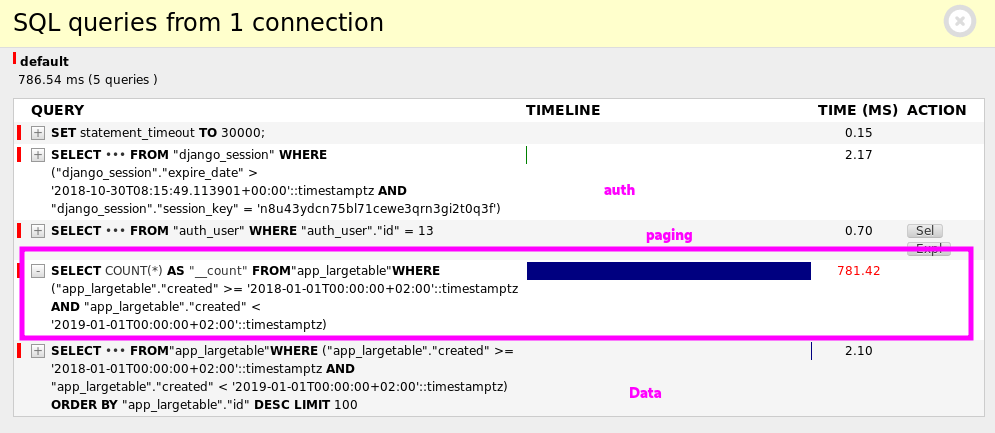

Django spent 781ms out of 786ms just to count the rows in the table. That's ~99.4% of the time, just for the paginator!

For reference, the actual query used to fetch the data took only 2.10ms. The reason it's much quicker is that it only needs to fetch one page (notice the LIMIT 100).

What Can We Do?

Making something scale is all about eliminating operations that work on the entire dataset. The paginator has to count the rows to determine how many pages there are. This forces every single page in the admin to scan the entire table.

As the table grows in size this query takes longer and longer to execute. The size of the table has a direct impact on the load time of a single page and this is exactly what we want to avoid.

Django ModelAdmin provides a way to override the paginator.

Our initial thought was to implement an entirely different type of paginator - one that doesn't calculate the number of pages, but only shows links to the previous and next pages.

Unfortunately, the paginator is embedded deep into Django admin - Django uses partial templates to render the paginator. This makes it difficult to create a paginator that doesn't count pages.

To provide a different paginator to a ModelAdminwe need to implement a Paginator. The interesting function in the paginator implementation is count:

# django/core/paginator.py

@cached_property

def count(self):

try:

return self.object_list.count()

except (AttributeError, TypeError):

return len(self.object_list)

This is where the horror happens. Django counts the rows in the queryset (or the object list) and caches the result.

If we want to eliminate the count this is where we need to start.

A Dumb Paginator

Let's take the simplest approach and create a paginator that returns a very large number without actually counting the rows:

# common/paginator.py

from django.core.paginator import Paginator

class DumbPaginator(Paginator):

"""

Paginator that does not count the rows in the table.

"""

@cached_property

def count(self):

return 9999999999

Let's add it to a ModelAdmin of a large table and see what happens:

# app/admin.py

from common.paginator import DumbPaginator

@admin.register(models.LargeTable)

class LargeTableAdmin(admin.ModelAdmin):

paginator = DumbPaginator

And the admin page:

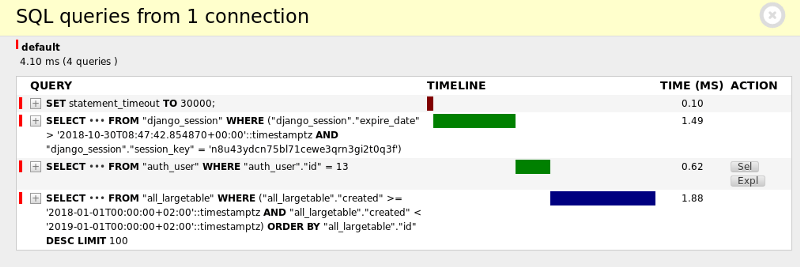

Wow! With the dumb paginator the page now loads in only 4ms. This is impressive but how does the UI looks like?

We made the paginator think there are 9999999999 results so this is what it shows. Clicking on a page that doesn't exist will open an empty list view.

Getting Creative

We saw that we can provide a custom pagination and eliminate the count. We also know that Django is very attached to this specific paginator template so we can't easily replace it.

At this point it's obvious that if we don't want to work too hard and alter Django's templates, we need to make a compromise. A compromise can be made either in the UI or in accuracy.

I personally don't like to sacrifice accuracy so fast so I tend to make compromises in the UI. Especially in internal projects such as ones implemented with Django admin.

Instead of always eliminating the pagination, what if we just limit the execution time of the count query:

# common/paginator.py

from django.core.paginator import Paginator

from django.db import connection, transaction, OperationalError

class TimeLimitedPaginator(Paginator):

"""

Paginator that enforces a timeout on the count operation.

If the operations times out, a fake bogus value is

returned instead.

"""

@cached_property

def count(self):

# We set the timeout in a db transaction to prevent it from

# affecting other transactions.

with transaction.atomic(), connection.cursor() as cursor:

cursor.execute('SET LOCAL statement_timeout TO 200;')

try:

return super().count

except OperationalError:

return 9999999999

We let the original paginator count the rows. If the count takes longer than 200ms, the database will kill the query, an OperationError will be raised and we return the fake value. If the count takes less than 200ms it will work as usual.

To check the new approach we set the paginator in the ModelAdmin:

# app/admin.py

from common.paginator import TimeLimitedPaginator

@admin.register(models.LargeTable)

class LargeTableAdmin(admin.ModelAdmin):

paginator = TimeLimitedPaginator

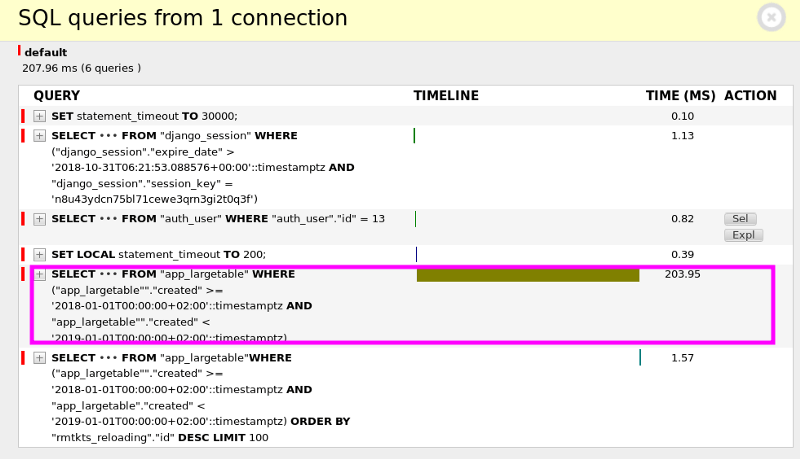

First let's try it with a big result set. For example, all the rows from year 2018:

Query took longer than 200ms and was killed.

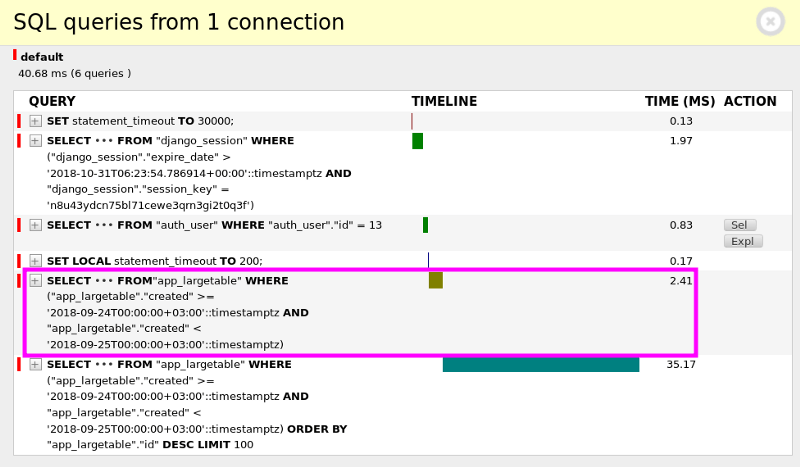

Let's try a shorter period. For example, only records from a single day in 2018:

Count took only 2.41ms. It was not killed and we got the regular "paginator" - exactly what we wanted!

source code

The complete code for TimeLimitedPaginator can be found in this gist.

Other Approaches

As discussed in the previous section, compromises can be made in different ways. Some ideas I encountered when I researched this issue were:

- Estimate the number of rows based on the database execution plan - in Django 2.1 there is even a new explain function on

QuerySet. This approach is very inaccurate, especially with complicated predicates, and might yield unexpected results. - Cache the number of rows - the boring and unimaginative solution. As always with a solution that involves caching, you need to decide when to invalidate the cache which is a problem of it's own.

- Completely replace Django's paginator - replacing templates and possibly making adjustments to the change list itself.

I went down this road initially but gave up when I realized it will be difficult to make this "plug and play". I do believe that a proper solution should let users replace the pagination implementation to something like cursor pagination (pagination that does not need to evaluate the entire dataset).